NestGSV Wearables Hackathon and Speak Easy

This weekend, NestGSV, AT&T, Vuzix, and a few other companies hosted a wearables hackathon in Redwood City. Having checked out the sponsors ahead of time, I became very interested in the Vuzix Smart Glasses. They were going to be the centerpiece of my hack.

In fact, this was nearly the perfect platform to build my hack on.

Public Speaking

Having spoken in front of a number of audiences, I have learned quite a number of tips and tricks that public speakers use to engage audiences and to make their presentations more memorable. However, as a human being, I still forget them when I get up on stage.

When speakers talk in front of an audience, some (lots?) get nervous. Some even freeze up and can’t start the presentation off right. Then you might forget critical points in your presentation and kick yourself afterwards. And if that wasn’t enough, you’re supposed to make eye contact with your audience, vary your attention among different people, and move about the stage without pacing. Got it? Good!

I could go into more tricks and tips, but that could take all day, literally.

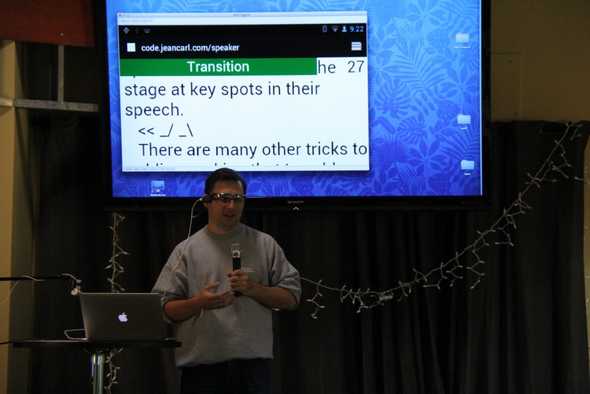

Speak Easy

Speak Easy is a heads-up display for the Vuzix M100 Smart Glasses. After loading your speech through the website, visit Speak Easy on your M100. When you’re ready, tilt your head up and Speak Easy will begin scrolling through your script.

If at any point you need to stop, tilting your head up will stop the scrolling. Tilting your head up again will resume the scrolling.
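The tilt-to-toggle behavior can be sketched as a small state machine. This is only an illustration, not the code from the hack: the names `createTiltToggle`, `TILT_UP_DEG`, and `NEUTRAL_DEG` and the threshold values are assumptions. The two thresholds make sure one long tilt counts once, because the head has to return to neutral before the next tilt is accepted.

```javascript
// Hypothetical thresholds, in degrees of pitch relative to a neutral head position.
const TILT_UP_DEG = 15; // pitch above this counts as a "tilt up"
const NEUTRAL_DEG = 5;  // pitch must drop below this before another tilt counts

// Returns an object whose update(pitchDeg) reports whether the script should be scrolling.
function createTiltToggle() {
  let armed = true;      // true when the head has returned to neutral
  let scrolling = false; // current scroll state

  return {
    update(pitchDeg) {
      if (armed && pitchDeg > TILT_UP_DEG) {
        scrolling = !scrolling; // one tilt flips start/stop
        armed = false;          // ignore further readings from the same tilt
      } else if (!armed && pitchDeg < NEUTRAL_DEG) {
        armed = true;           // head is back to neutral, accept the next tilt
      }
      return scrolling;
    },
  };
}
```

Feeding it a stream of pitch readings such as 0, 20, 25, 2, 20 would start scrolling on the first 20 and stop it on the second.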

Now you can read your script and have a head start on being a great public speaker.

However, you might end up standing in the same position, looking at the same side of the room while you speak. Speak Easy will monitor your head and body movements.

If Speak Easy senses the position of your head is stationary, you might be focusing on one person or one side of the room. An unobtrusive colored bar will display at the top of the screen alerting you to alter your point of view.

If Speak Easy senses your body is stationary, you might want to move about the stage a little more. An unobtrusive colored bar will display at the top of the screen alerting you to move about the stage. Just be careful: you don’t want to pace (another habit some speakers have).
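One way to decide that the head or body is "stationary" is to keep a sliding window of recent sensor readings and check how much they vary. The sketch below is a guess at the idea rather than the hack's actual logic: `createStillnessMonitor`, the window size, and the minimum range are all hypothetical, and it ignores the 0/360 degree wraparound that a real heading value would need to handle.

```javascript
// Flags stillness when the last `windowSize` readings span less than `minRange`.
// Both parameters are illustrative assumptions, not values from Speak Easy.
function createStillnessMonitor(windowSize, minRange) {
  const samples = [];

  return {
    // Add one reading (for example, head yaw in degrees); returns true if stationary.
    add(value) {
      samples.push(value);
      if (samples.length > windowSize) samples.shift(); // keep only the newest readings
      if (samples.length < windowSize) return false;    // not enough history yet

      const range = Math.max(...samples) - Math.min(...samples);
      return range < minRange;
    },
  };
}
```

The same monitor could be created twice, once for head direction and once for body movement, with each one driving its own colored bar.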

This sounds pretty simple to master, but it is still hard to speak in front of an audience.

Lessons Learned

Vuzix supports the Android platform. If you are a native Android developer, you shouldn’t have much difficulty deploying your app onto their glasses. However, I wanted to build Speak Easy completely in HTML/JavaScript and within the browser. That proved to be more difficult. The problem wasn’t the browser itself, which is similar to the stock Android browser.

Accessing the accelerometer and getting accurate values was one challenge. Some of the events and values aren’t accessible through JavaScript or require some additional manipulation. Browsers are notorious for their limited access to hardware and their lack of cross-browser standards. Some events worked on my Android phone, but didn’t on the smart glasses. I found that nodding up and down gave the most accurate values.
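For reference, here is a minimal sketch of reading a nod angle in the browser. It uses the standard `devicemotion` event and its `accelerationIncludingGravity` property. Which axis corresponds to a nod on the M100 is an assumption here, and the helper names `pitchFromGravity` and `createLowPass` are made up for illustration. The low-pass filter is one example of the "additional manipulation" noisy readings may need.

```javascript
// Angle (in degrees) of the device's y axis relative to horizontal, from a gravity reading.
// Assumes the y axis tracks the nod; the real axis depends on how the sensor is mounted.
function pitchFromGravity(g) {
  const rad = Math.atan2(g.y, Math.sqrt(g.x * g.x + g.z * g.z));
  return (rad * 180) / Math.PI;
}

// Simple exponential low-pass filter to smooth jittery readings.
// alpha near 0 smooths heavily; alpha near 1 follows the raw signal.
function createLowPass(alpha) {
  let value = null;
  return (sample) => {
    value = value === null ? sample : value + alpha * (sample - value);
    return value;
  };
}

// Only attach the listener where the event exists (it may be missing or partial on some devices).
if (typeof window !== "undefined" && "DeviceMotionEvent" in window) {
  const smooth = createLowPass(0.2);
  window.addEventListener("devicemotion", (event) => {
    const g = event.accelerationIncludingGravity;
    if (!g || g.x === null || g.y === null || g.z === null) return; // sensor data unavailable
    const pitch = smooth(pitchFromGravity(g));
    console.log("pitch (degrees):", pitch.toFixed(1));
  });
}
```

Checking for missing or `null` values before using them matters because devices differ in which motion fields they actually fill in.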

Keyboard input is very hard, if not nearly impossible, with just the glasses. I ended up using Android Screencast on my computer, connected to the glasses, to type in the URL of my app. From my understanding, keyboard input is mainly supported through Bluetooth-connected devices.

As with all wearable glasses, the right angle and fit are important. The M100 is slightly off balance, so it shifts easily. I would probably scrap the “safety glasses” frame for something a little sturdier.

Vuzix

As for the Vuzix Smart Glasses, they are pretty good for their first version. The screen can be tilted up and down, and the arm can be bent for the right alignment horizontally.

The controls include a front and back toggle button with a third single button towards the back of the unit. The front toggle button traverses menus down or app icons to the right. Long pressing this button also brings up a contextual menu. The back toggle button does the reverse. And the rear button is used for selection.

The app menu is surprisingly good looking, and it is relatively easy to navigate to various apps. The unit does get warm against the head during extended use. The screen and arm also block a portion of your field of view, which is really noticeable at first. Google Glass, a competitor to Vuzix, doesn’t impede the view of the wearer, instead sitting above eye level. The arm was too firm for me to test whether the screen could be moved higher without fear of breaking it.

As for taking pictures with the M100, it had its pros and cons. It was always ready to take a picture. However, only a portion of the view was shown on the screen. I didn’t have enough time to understand what part of the overall photo was being previewed. For the fidgety type, pictures blur so a steady head is best.

AT&T

I was finally able to understand AT&T’s Text-to-Speech API and utilize it.

Speak Easy uses their Text-To-Speech API to verbally announce how much time is remaining, at 30-second intervals. This is a good reminder for those who get caught up in what they are speaking about and don’t look at the timer in the top right corner of the screen.
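The timing side of this can be sketched without touching the speech service. In the example below, `formatRemaining`, `announcementFor`, and `startCountdown` are hypothetical names, and `speak` is a placeholder callback standing in for whatever sends text to the Text-To-Speech API. No real AT&T call is shown, since its details are not covered here.

```javascript
// Turns a number of seconds into a short spoken phrase.
function formatRemaining(seconds) {
  const minutes = Math.floor(seconds / 60);
  const rest = seconds % 60;
  const parts = [];
  if (minutes > 0) parts.push(minutes + (minutes === 1 ? " minute" : " minutes"));
  if (rest > 0) parts.push(rest + " seconds");
  return parts.length > 0 ? parts.join(" ") + " remaining" : "Time is up";
}

// Returns the phrase to announce at this moment, or null between intervals.
function announcementFor(remainingSeconds, intervalSeconds = 30) {
  if (remainingSeconds < 0 || remainingSeconds % intervalSeconds !== 0) return null;
  return formatRemaining(remainingSeconds);
}

// Ticks once per second and hands each due announcement to `speak` (a placeholder callback).
// Returns a function that cancels the countdown.
function startCountdown(totalSeconds, speak) {
  let remaining = totalSeconds;
  const id = setInterval(() => {
    remaining -= 1;
    const text = announcementFor(remaining);
    if (text !== null) speak(text);
    if (remaining <= 0) clearInterval(id);
  }, 1000);
  return () => clearInterval(id);
}
```

For a five-minute talk, `startCountdown(300, speak)` would hand `speak` a phrase every 30 seconds and finish with "Time is up".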

What’s next?

Speak Easy could be extended to help speakers with a number of other issues, including checking whether your volume is adequate, showing bullet notes instead of full speeches, and controlling slides.